WELCOME

AI is making more of the journey feel effortless. Until it doesn’t.

This issue looks at the messy handoffs where human skills still matter: when shopping intent gets lost, service empathy becomes a script, collaboration becomes a simulation, experimentation becomes output, and AI gets a little too agreeable.

That’s where the undelegatable work begins.

CURIOSITY

I tried to buy a simple thing last weekend: store-brand NyQuil. It turned into a useful reminder of where AI-assisted shopping still breaks.

I started with a clear intent: → liquid → avoid strong fruit flavor → nearby pickup → use a retailer coupon

But even before I got to the product, the first recommendation defaulted to delivery. From an aggregator, not the retailer. Not wrong. Just not what I needed. Because I wasn’t optimizing for speed. I was optimizing for cost, channel, and control.

So I had to correct the path: → pickup, not delivery → retailer-direct, not a third-party delivery service.

Then came the second layer of friction. The retailer offered both a Cherry version and an Original Flavor version. That may make sense in retail taxonomy. But from a customer point of view, it creates a different question: How does AI interpret a request for non-flavored medicine?

And that’s what made the whole experience interesting. ChatGPT could help me discover options. The retailer could help me fulfill the purchase. But neither carried my actual priorities cleanly from discovery to transaction.

That’s where we feel the gaps in AI-assisted shopping journeys. AI is getting smarter at helping us find products. It’s still not as good at carrying our real intent into purchase. And in commerce, that’s where the experience breaks: not always in discovery, but in the handoff.

AI-assisted shopping is new and niche for now, but staying curious and experimenting early will help us learn how to design a better customer experience in this new channel.

CRITICAL THINKING

Sycophancy: the emperor’s new clothes

The sneaky risk with AI sycophancy isn’t that it gives us bad answers. It’s that it gives us answers that feel good enough to stop questioning. That’s why Critical Judgment is undelegatable: not just checking if AI is right, but noticing when it’s too agreeable to be useful.

EMPATHY

Stop Blaming AI: Why Your Customer Service Experience Feels Broken

This article gets at a useful truth about Empathy in AI-enabled service: Customers don’t experience your empathy as tone.

They experience it as whether your system remembers context, knows when to stop automating, and gets them to a human before the polite chatbot becomes a very well-mannered wall. Empathy is not the script. It is the handoff.

COLLABORATE

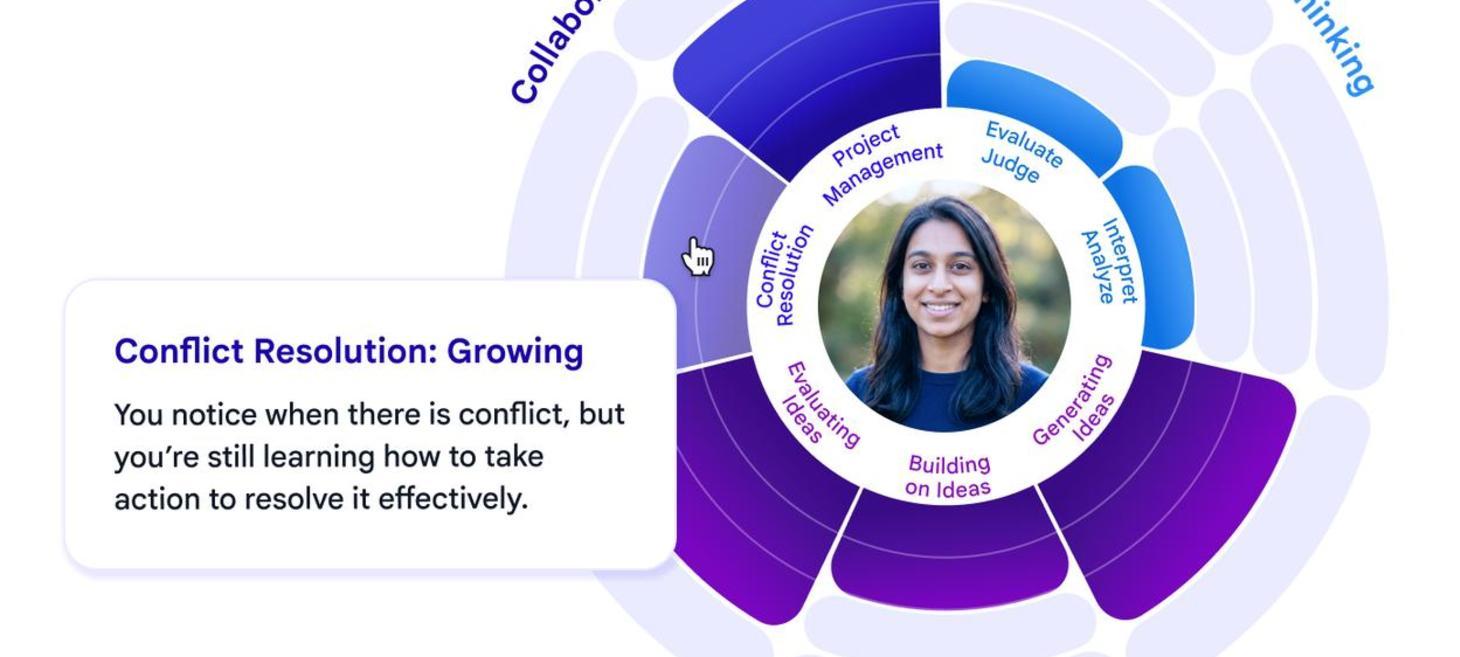

Towards developing future-ready skills with generative AI

This piece made me wonder if AI will make us better collaborators or just better at performing collaboration. A simulation can teach useful moves: listen, adapt, resolve conflict, manage the project. But real collaboration is still messier than choosing the “right” response in a controlled scenario.

The undelegatable part is not knowing what collaboration looks like. It’s noticing what the team is avoiding, what power is shaping the room, and when “alignment” is just disagreement wearing a nicer outfit.

EXPERIMENT

AI and Design: Fundamentals and The Future (ft. Dan Saffer)

This episode of Finding Your Way is a good reminder that experimentation is still deeply undelegatable. AI can help us generate more ideas, prototypes, and variations faster, but speed only matters if we are learning from what we make. The judgment is in knowing what to test, what the test revealed, and whether the result moves us closer to something meaningful.

UNTIL NEXT TIME

Did you enjoy this issue of Undelegatable by Being Designerly? Will you share it with a friend or co-worker? It’s a simple yet helpful way to support this labor of love.

I’d love your thoughts, suggestions, and feedback, positive or negative. Just reply or email me at lycerejo (at) gmail.com. Thank you!